Features

Blue indicates iGibson 2.0 features and red indicates iGibson 1.0 features.

Object States

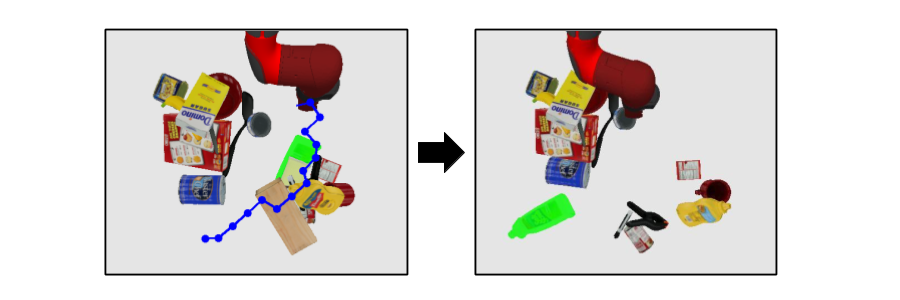

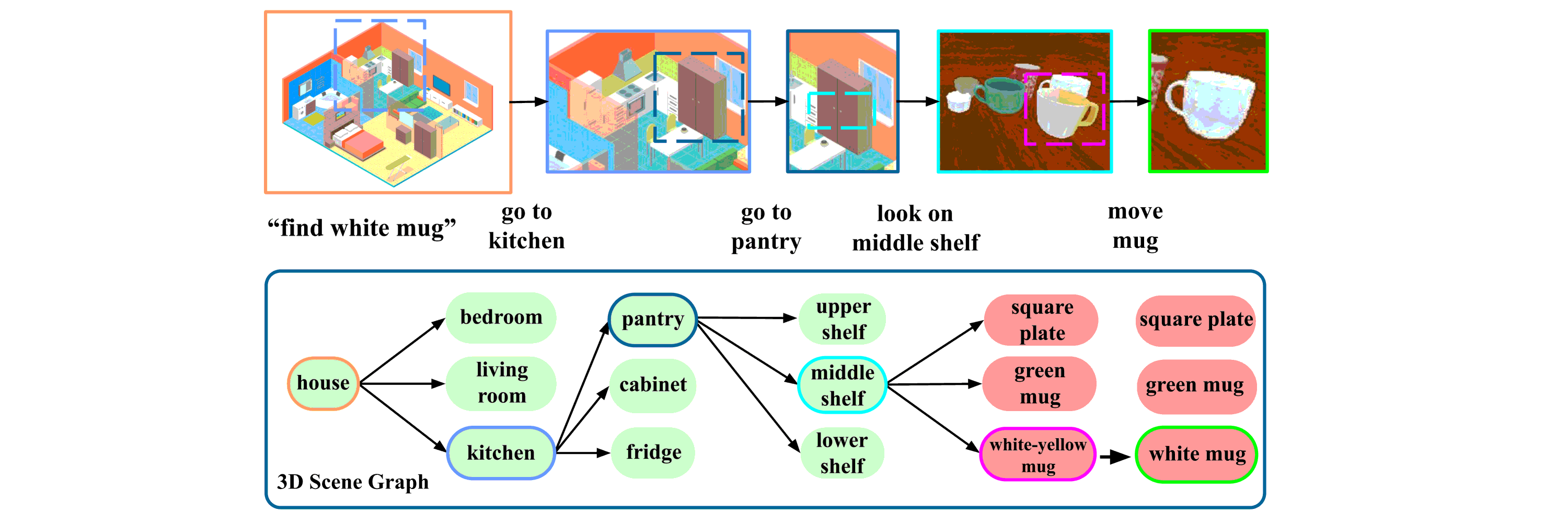

iGibson 2.0 supports object states such as temperature, wetness level, cleanliness level, and toggled and sliced states, which are needed to cover a wider range of tasks.

Predicate Logic Functions

iGibson 2.0 implements a set of predicate logic functions that map the simulator states to logic states like Cooked or Soaked.
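
To illustrate what such a mapping can look like, here is a minimal Python sketch. Everything in it is an assumption for illustration: the `ObjectState` fields, the threshold constants, and the predicate names are hypothetical and are not the iGibson API. One design choice worth noting: `cooked` is computed from the highest temperature the object has ever reached rather than its current temperature, so an object stays cooked after it cools down.

```python
from dataclasses import dataclass

# Hypothetical thresholds chosen for illustration only; not iGibson's values.
COOK_TEMPERATURE_C = 70.0
SOAKED_WETNESS = 0.5
CLEAN_DIRT_MAX = 0.05


@dataclass
class ObjectState:
    """Hypothetical continuous and discrete simulator state for one object."""
    temperature: float = 20.0      # current temperature in degrees Celsius
    max_temperature: float = 20.0  # highest temperature ever reached
    wetness: float = 0.0           # 0.0 (dry) .. 1.0 (fully wet)
    dirt: float = 0.0              # 0.0 (clean) .. 1.0 (fully dirty)
    is_toggled_on: bool = False
    is_sliced: bool = False

    def set_temperature(self, t: float) -> None:
        # Track the peak temperature so that cooking is irreversible.
        self.temperature = t
        self.max_temperature = max(self.max_temperature, t)


# Each predicate maps simulator state to a boolean logic state.
def cooked(s: ObjectState) -> bool:
    return s.max_temperature >= COOK_TEMPERATURE_C


def soaked(s: ObjectState) -> bool:
    return s.wetness >= SOAKED_WETNESS


def clean(s: ObjectState) -> bool:
    return s.dirt <= CLEAN_DIRT_MAX


def toggled_on(s: ObjectState) -> bool:
    return s.is_toggled_on


def sliced(s: ObjectState) -> bool:
    return s.is_sliced


# Usage: heat an object past the threshold, then let it cool down.
apple = ObjectState()
apple.set_temperature(80.0)
apple.set_temperature(25.0)
print(cooked(apple))  # True: the peak temperature exceeded the threshold
```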

Generative System

Given a logic state, iGibson 2.0 can sample valid physical states that satisfy it. This makes it possible to generate an unlimited number of task instances with minimal human effort.
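
One simple way to realize this kind of sampling is rejection sampling: propose random physical placements and keep the first one that satisfies the logic predicate. The sketch below does this for a hypothetical `OnTop` relation between axis-aligned boxes and also rejects placements that collide with objects already in the scene. The `Box` type, `on_top`, and `sample_on_top` are illustrative assumptions, not iGibson's sampler, which works with full object meshes and physics.

```python
import random
from dataclasses import dataclass
from typing import Optional, Sequence, Tuple


@dataclass
class Box:
    """Axis-aligned box given by its center and half extents (hypothetical)."""
    x: float
    y: float
    z: float
    hx: float
    hy: float
    hz: float


def overlaps(a: Box, b: Box) -> bool:
    # Two axis-aligned boxes intersect iff they overlap on every axis.
    return (abs(a.x - b.x) < a.hx + b.hx
            and abs(a.y - b.y) < a.hy + b.hy
            and abs(a.z - b.z) < a.hz + b.hz)


def on_top(obj: Box, support: Box, tol: float = 1e-6) -> bool:
    # Hypothetical OnTop predicate: obj's bottom face touches support's top
    # face, and obj's footprint lies entirely inside support's footprint.
    resting = abs((obj.z - obj.hz) - (support.z + support.hz)) <= tol
    inside_x = abs(obj.x - support.x) + obj.hx <= support.hx
    inside_y = abs(obj.y - support.y) + obj.hy <= support.hy
    return resting and inside_x and inside_y


def sample_on_top(half_extents: Tuple[float, float, float], support: Box,
                  others: Sequence[Box], rng: random.Random,
                  margin: float = 1.0, max_tries: int = 1000) -> Optional[Box]:
    """Draw random (x, y) around the support, drop the object onto its top
    face, and accept the first collision-free candidate satisfying on_top.
    Returns None if every proposal is rejected."""
    hx, hy, hz = half_extents
    for _ in range(max_tries):
        x = rng.uniform(support.x - support.hx - margin, support.x + support.hx + margin)
        y = rng.uniform(support.y - support.hy - margin, support.y + support.hy + margin)
        z = support.z + support.hz + hz  # bottom face rests on the support
        candidate = Box(x, y, z, hx, hy, hz)
        if on_top(candidate, support) and not any(overlaps(candidate, o) for o in others):
            return candidate
    return None


# Usage: place a small box on a table that already holds a mug.
rng = random.Random(0)
table = Box(0.0, 0.0, 0.4, 0.6, 0.4, 0.4)    # top face at z = 0.8
mug = Box(0.2, 0.1, 0.85, 0.05, 0.05, 0.05)
placement = sample_on_top((0.05, 0.05, 0.05), table, [mug], rng)
```

Calling the sampler again with a different seed yields a different valid placement, which is the sense in which many task instances can be generated from a single logical description.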

VR Interface

iGibson 2.0 includes a virtual reality (VR) interface to immerse humans in its scenes to collect demonstrations.

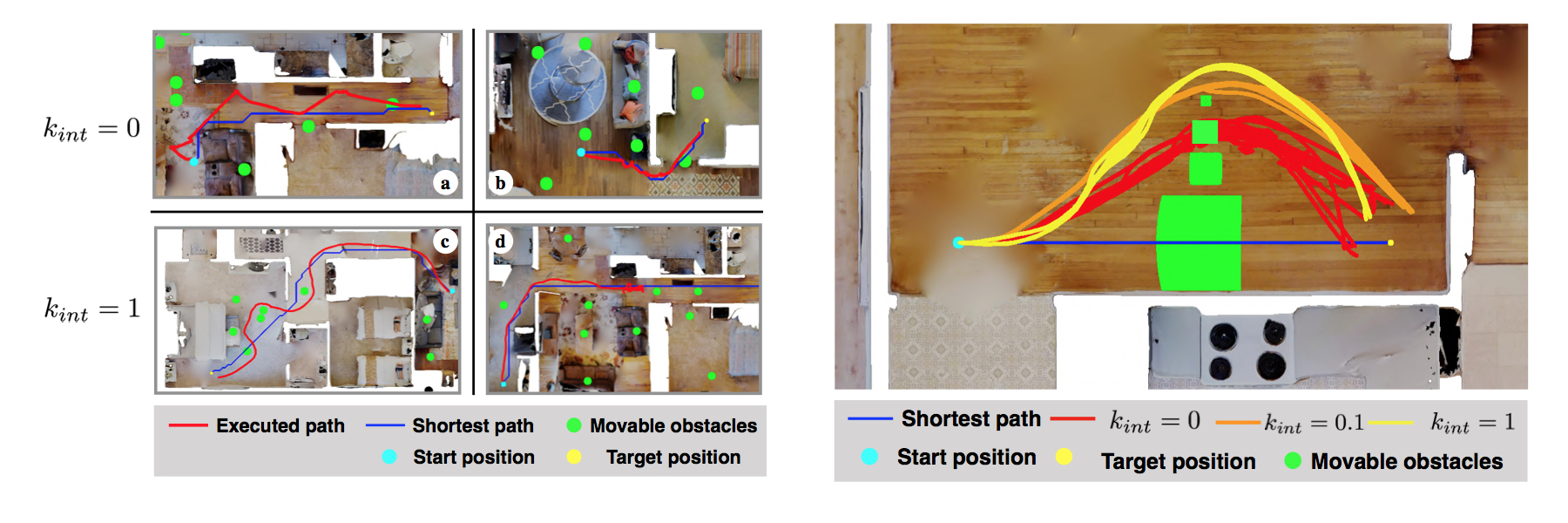

Interactive Scenes

We released the iGibson dataset, which consists of 15 fully interactive scenes and 500+ object models.

Compatibility with Large Scene Datasets

Support for 12,000+ scenes from CubiCasa5K and 3D-Front with full interaction, and backward compatibility with GibsonV1's 572 buildings.

Robot Models

Models of the most common real robots and AI agents: Fetch and Freight, Husky, TurtleBot v2, Locobot, Minitaur, JackRabbot, a generic quadrocopter, Humanoid, and Ant.

Physically Realistic Articulated Objects

Additional annotation of materials and dynamic properties on top of PartNet and Motion Dataset for more realistic simulation.

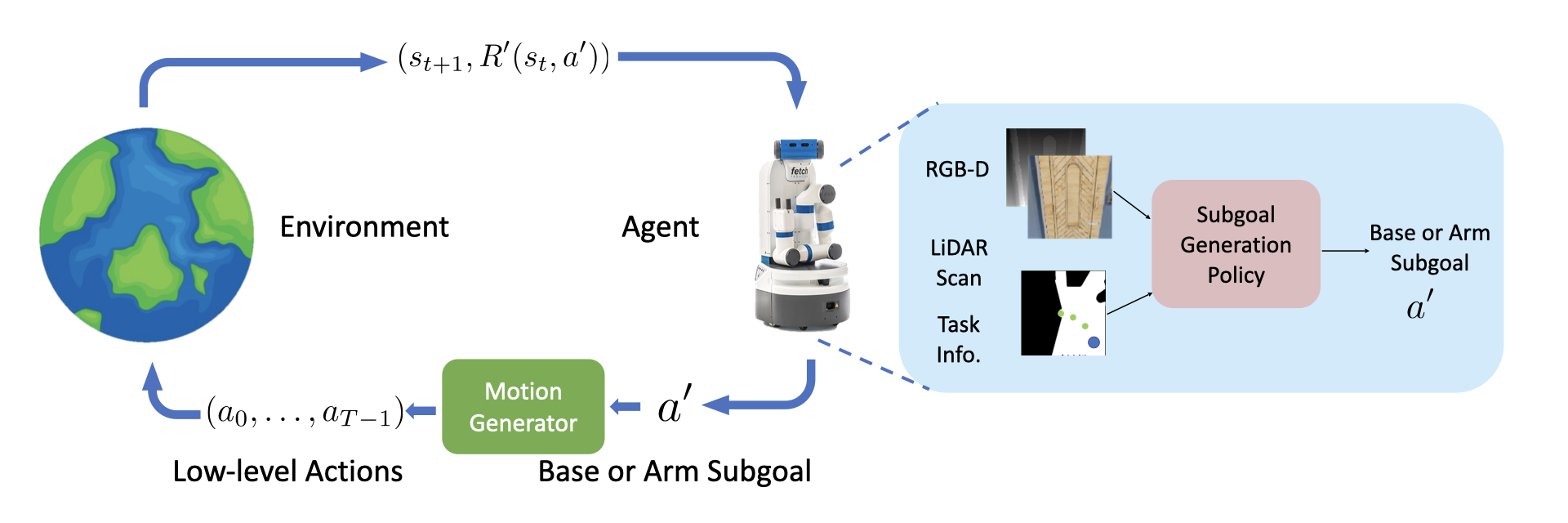

Fast Physics-Based Rendering and Physics

Achieves more than 100 fps with full physics on fully interactive scenes, and more than 400 fps for rendering only.

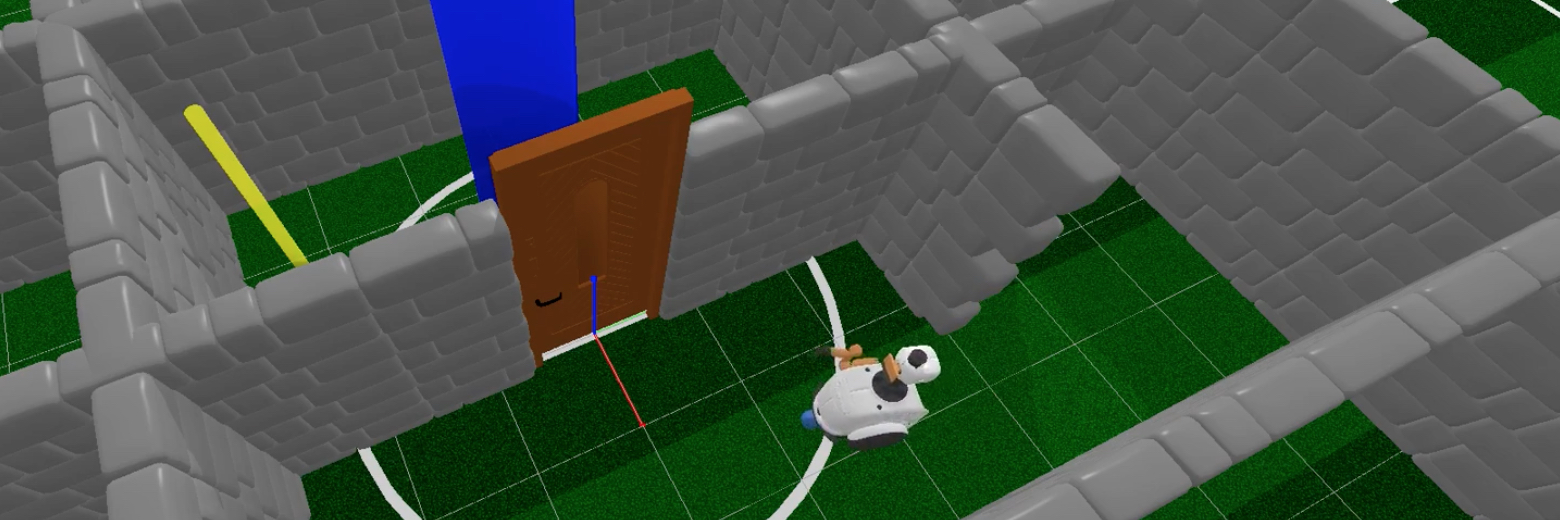

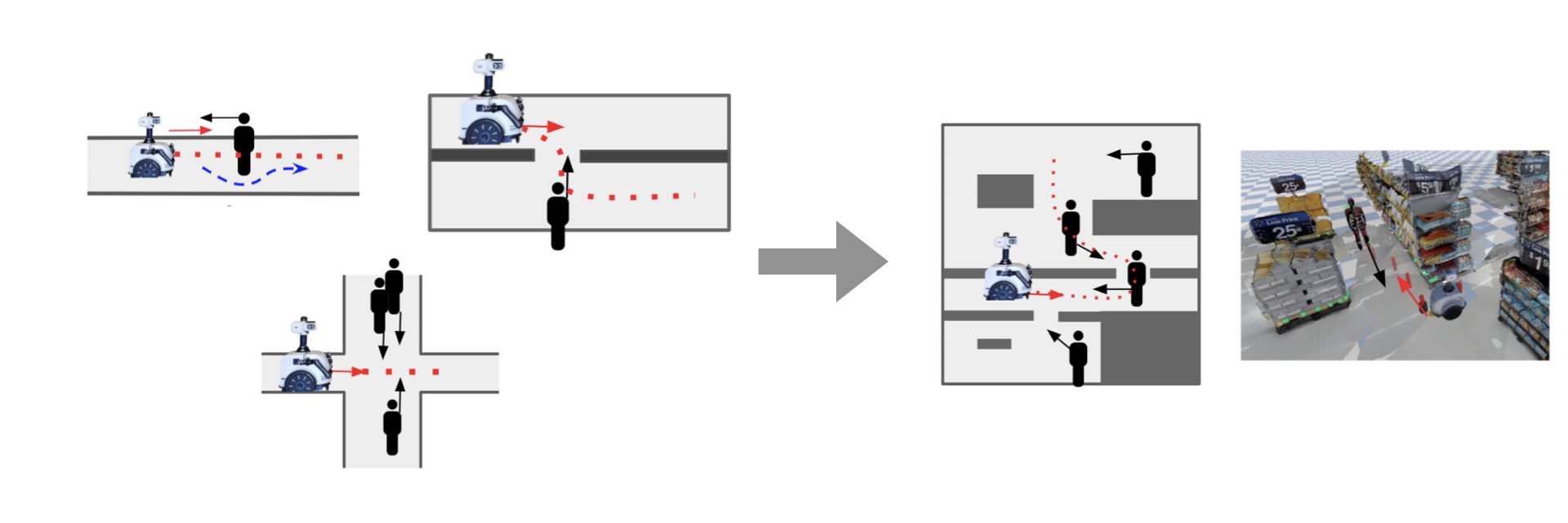

Motion Planning and Human-iG Interface

Built-in motion planners (e.g., RRT, BiRRT, LazyPRM) for the arm and base, and an intuitive Human-iG interface for efficient demonstration collection.
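
For readers unfamiliar with sampling-based planners, here is a minimal sketch of plain RRT for a point-robot base in 2D with circular obstacles. It is a generic textbook version written for illustration, not iGibson's planner implementation; the function names, step size, goal bias, and obstacle model are all assumptions. BiRRT follows the same idea but grows two trees, one from the start and one from the goal, and tries to connect them.

```python
import math
import random


def segment_free(p, q, obstacles, step=0.01):
    """Check the straight segment p->q against circles (cx, cy, r) by dense sampling."""
    n = max(1, int(math.dist(p, q) / step))
    for i in range(n + 1):
        t = i / n
        x = p[0] + t * (q[0] - p[0])
        y = p[1] + t * (q[1] - p[1])
        for cx, cy, r in obstacles:
            if math.hypot(x - cx, y - cy) <= r:
                return False
    return True


def rrt(start, goal, obstacles, bounds, rng, step_size=0.3, goal_bias=0.1, max_iters=5000):
    """Grow a tree from start until it reaches goal; return the path or None."""
    (xmin, xmax), (ymin, ymax) = bounds
    nodes = [start]
    parent = {0: None}
    for _ in range(max_iters):
        # Sample a random configuration, occasionally the goal itself.
        if rng.random() < goal_bias:
            sample = goal
        else:
            sample = (rng.uniform(xmin, xmax), rng.uniform(ymin, ymax))
        # Nearest tree node by linear scan (a k-d tree would scale better).
        i_near = min(range(len(nodes)), key=lambda i: math.dist(nodes[i], sample))
        near = nodes[i_near]
        d = math.dist(near, sample)
        if d == 0:
            continue
        # Steer at most step_size from the nearest node toward the sample.
        s = min(step_size, d) / d
        new = (near[0] + s * (sample[0] - near[0]), near[1] + s * (sample[1] - near[1]))
        if not segment_free(near, new, obstacles):
            continue
        nodes.append(new)
        parent[len(nodes) - 1] = i_near
        # If the goal is within reach and visible, connect and extract the path.
        if math.dist(new, goal) <= step_size and segment_free(new, goal, obstacles):
            nodes.append(goal)
            parent[len(nodes) - 1] = len(nodes) - 2
            path, i = [], len(nodes) - 1
            while i is not None:
                path.append(nodes[i])
                i = parent[i]
            return path[::-1]
    return None


# Usage: plan around a single circular obstacle between start and goal.
obstacles = [(2.0, 2.0, 0.8)]
path = rrt((0.0, 0.0), (4.0, 4.0), obstacles, ((-1.0, 5.0), (-1.0, 5.0)), random.Random(1))
```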

Multi-Agent Support

Support for training in collaborative or adversarial setups, where an arbitrary number of agents can see and interact with each other.

Reinforcement Learning Baselines

Reinforcement learning starter code for navigation tasks with visual and LiDAR signals using SAC.

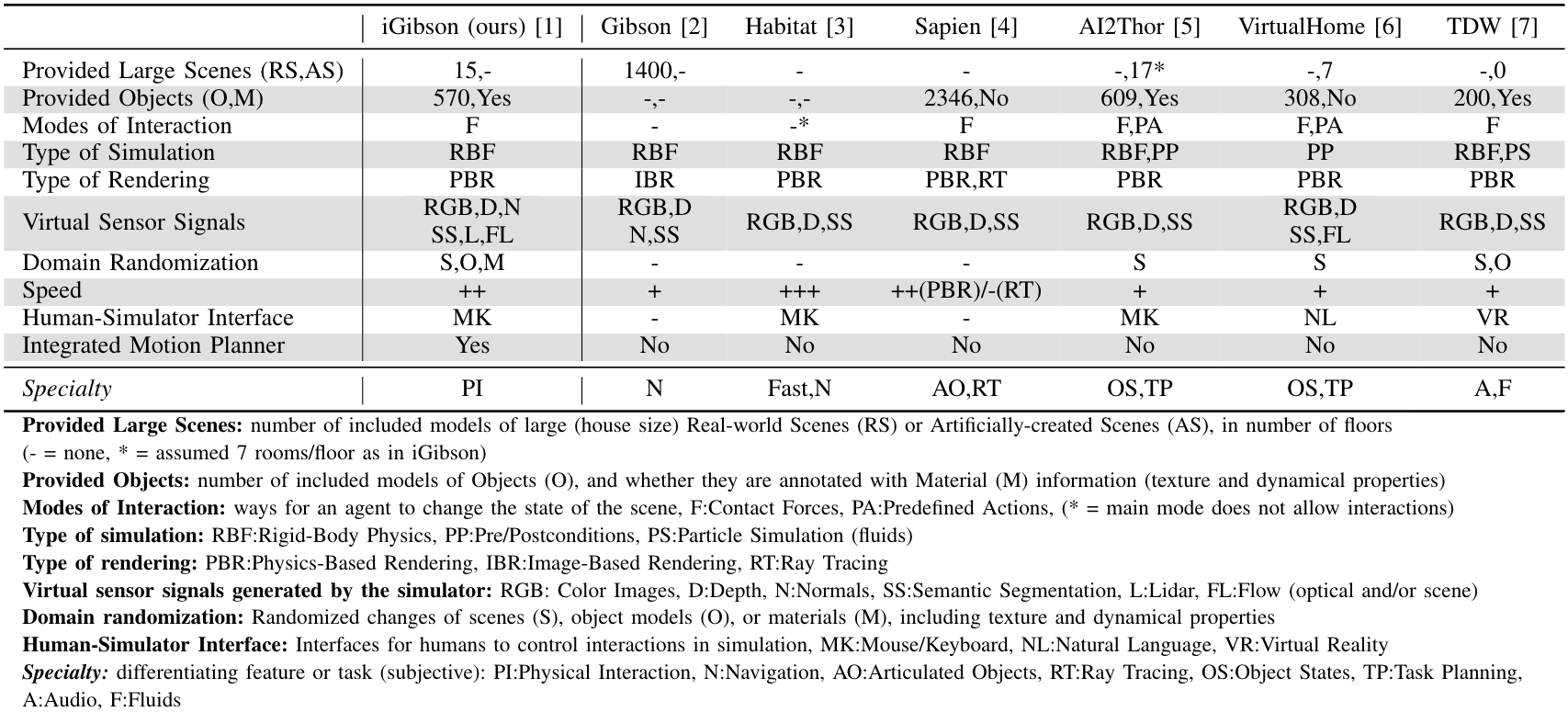

Comparison to other environments:

iGibson [1], Gibson [2], Habitat [3], SAPIEN [4], AI2-THOR [5], VirtualHome [6], ThreeDWorld [7]

Fei Xia

Bokui (William) Shen

Chengshu Li

Michael Lingelbach

Cem Gokmen

Micael Tchapmi

Lyne Tchapmi

Kent Vainio

Claudia Perez D'Arpino

Sanjana Srivastava

Shyamal Buch

Linxi (Jim) Fan

Guanzhi Wang

Andrey Kurenkov

Gokul Dharan

Tanish Jain

Roberto Martín-Martín

Karen Liu

Hyo Gweon

Jiajun Wu

Li Fei-Fei

Silvio Savarese
