Highlights of the Challenge

Highly Photorealistic Scenes

Full Interactivity

Pedestrian Simulation

Tasks

The iGibson Challenge 2022 uses the iGibson simulator [1] and is composed of two navigation tasks that represent important skills for autonomous visual navigation:

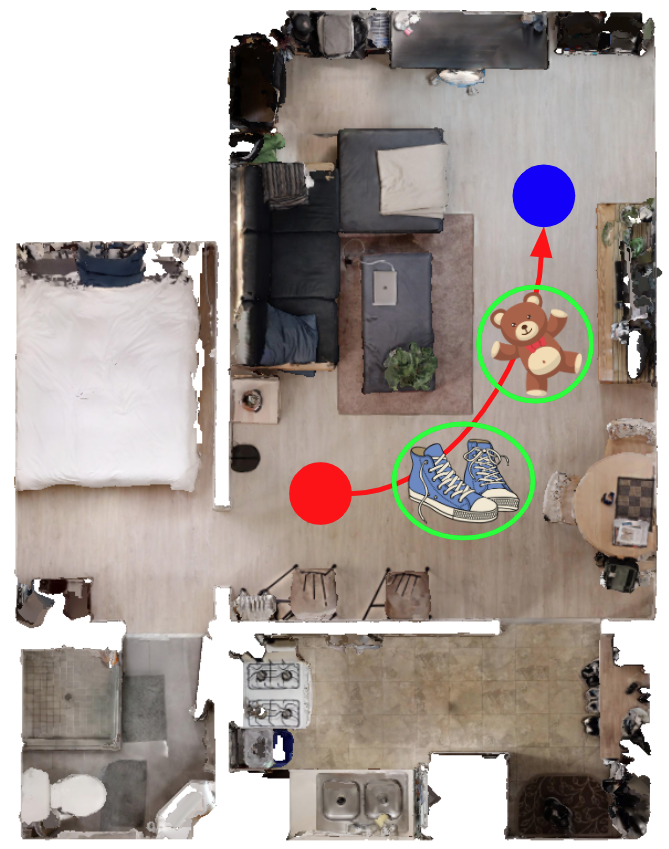

Interactive Navigation

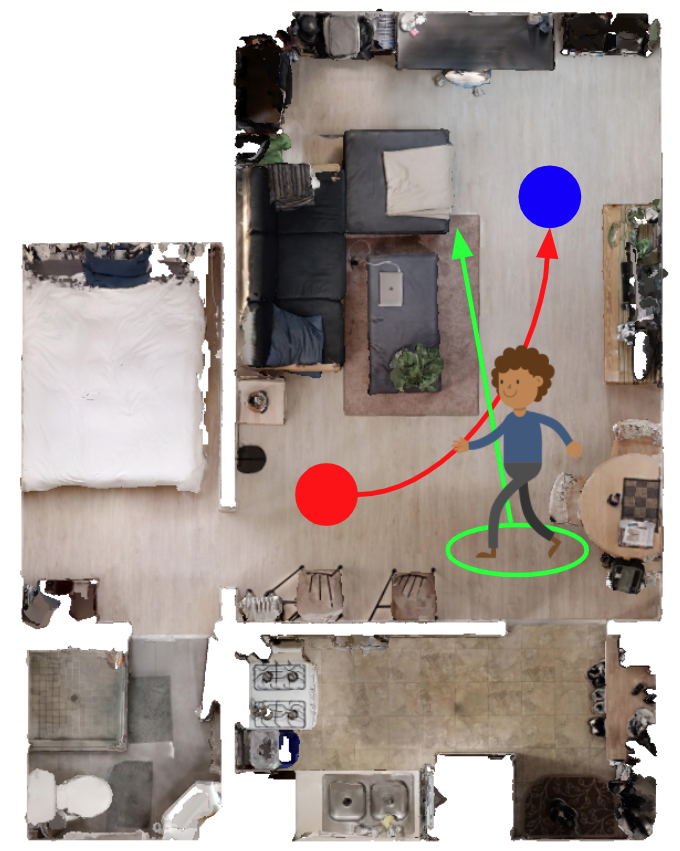

Social Navigation

- Interactive Navigation: the agent is required to reach a navigation goal specified by a coordinate (as in PointNav[2]) given visual information (RGB+D images). The agent is allowed (or even encouraged) to collide and interact with the environment in order to push obstacles away to clear the path. Note that all objects in our scenes are assigned realistic physical weight and fully interactable. However, as in the real world, while some objects are light and movable by the robot, others are not. Along with the furniture objects originally in the scenes, we also add additional objects (e.g. shoes and toys) from the Google Scanned Objects dataset to simulate real-world clutter. We will use Interactive Navigation Score (INS)[3] to evaluate agents' performance in this task.

- Social Navigation: the agent is required to navigate the goal specified by a coordinate while moving around pedestrians in the environment. Pedestrians in the scene move towards randomly sampled locations, and their movement is simulated using the social-forces model ORCA [4] integrated in iGibson [1], similar to the simulation enviroments in [5]. The agent shall avoid collisions or proximity to pedestrians beyond a threshold (distance <0.3 meter) to avoid episode termination. It should also maintain a comfortable distance to pedestrians (distance <0.5 meter), beyond which the score is penalized but episodes are not terminated. We will use the average of STL (Success weighted by Time Length) and PSC (Personal Space Compliance) to evaluate the agents' performance. More details can be found in the "Evaluation Metrics" section below.

References

[1] iGibson, a Simulation Environment for Interactive Tasks in Large Realistic Scenes.. Bokui Shen, Fei Xia, Chengshu Li, Roberto Martín-Martín, Linxi Fan, Guanzhi Wang, Shyamal Buch, Claudia D'Arpino, Sanjana Srivastava, Lyne P Tchapmi, Micael E Tchapmi, Kent Vainio, Li Fei-Fei, Silvio Savarese, 2020.

[2] On evaluation of embodied navigation agents. Peter Anderson, Angel Chang, Devendra Singh Chaplot, Alexey Dosovitskiy, Saurabh Gupta, Vladlen Koltun, Jana Kosecka, Jitendra Malik, Roozbeh Mottaghi, Manolis Savva, Amir R. Zamir. arXiv:1807.06757, 2018.

[3] Interactive Gibson Benchmark: A Benchmark for Interactive Navigation in Cluttered Environments. Fei Xia, William B. Shen, Chengshu Li, Priya Kasimbeg, Micael Tchapmi, Alexander Toshev, Roberto Martín-Martín, and Silvio Savarese. RA-L, to be presented at ICRA 2020.

[4] RVO2 Library: Reciprocal Collision Avoidance for Real-Time Multi-Agent Simulation. Jur van den Berg, Stephen J. Guy, Jamie Snape, Ming C. Lin, and Dinesh Manocha, 2011.

[5] Robot Navigation in Constrained Pedestrian Environments using Reinforcement Learning. Claudia Pérez-D'Arpino, Can Liu, Patrick Goebel, Roberto Martín-Martín and Silvio Savarese. ICRA 2021.

Evaluation Metrics

- Interactive Navigation: We will use Interactive Navigation Score (INS) as our evaluation metrics. INS is an average of Path Efficiency and Effort Efficiency. Path Efficiency is equivalent to SPL (Success weighted by Shortest Path). Effort Efficiency captures both the excess of displaced mass (kinematic effort) and applied force (dynamic effort) for interaction with objects. We argue that the agent needs to strike a healthy balance between taking a shorter path to the goal and causing less disturbance to the environment. More details can be found in our paper.

- Social Navigation: We will use the average of STL (Success weighted by Time Length) and PSC (Personal Space Compliance) as our evaluation metrics. STL is computed by success * (time_spent_by_ORCA_agent / time_spent_by_robot_agent). The second term is the number of timesteps that an oracle ORCA agent take to reach the same goal assigned to the robot. This value is clipped by 1. In the context of Social Navigation, we argue STL is more applicable than SPL because a robot agent can achieve perfect SPL by "waiting out" all pedestrians before it makes a move, which defeats the purpose of the task. PSC (Personal Space Compliance) is computed as the percentage of timesteps that the robot agent comply with the pedestrians' personal space (distance >= 0.5 meter). We argue that the agent needs to strike a heathy balance between taking a shorted time to reach the goal and incuring less personal space violation to the pedestrians.

Dataset

We provide 8 scenes reconstructed from real world apartments in total for training in iGibson. All objects in the scenes are assigned realistic weight and fully interactable. For interactive navigation, we also provide 20 additional small objects (e.g. shoes and toys) from the Google Scanned Objects dataset. For fairness, please only use these scenes and objects for training.

For evaluation, we have 2 unseen scenes in our dev split and 5 unseen scenes in our test split. We also use 10 unseen small objects (they will share the same object categories as the 20 training small objects, but they will be different object instances).

8 Training Scenes

Setup

We adopt the following task setup:

- Observation: (1) Goal position relative to the robot in polar coordinates, (2) current linear and angular velocities, (3) RGB+D images.

- Action: Desired normalized linear and angular velocity.

- Reward: We provide some basic reward functions for reaching goal and making progress. Feel free to create your own.

- Termination conditions: The episode termintes after 500 timesteps or the robot collides with any pedestrian in the Social Nav task.

The tech spec for the robot and the camera sensor can be found in our starter code TBA.

For Interactive Navigation, we place N additional small objects (e.g. toys, shoes) near the robot's shortest path to the goal (N is proportional to the path length). These objects are generally physically lighter than the objects originally in the scenes (e.g. tables, chairs).

For Social Navigation, we place M pedestrians randomly in the scenes that pursue their own random goals during the episode while respecting each other's personal space (M is proportional to the physical size of the scene). The pedestrians have the same maximum speed as the robot. They are aware of the robot so they won't walk straight into the robot. However, they also won't yield to the robot: if the robot moves straight towards the pedestrians, it will hit them and the episode will fail.

Phases

- Minival Phase: The purpose of this phase to make sure your policy can be successfully submitted and evaluated. Participants are expected to download our starter code TBA and submit a baseline policy, even a trivial one, to our evaluation server to verify their entire pipeline is correct.

- Dev Phase: This phase is split into Interactive Navigation and Social Navigation tasks. Participants are expected to submit their solutions to each of the tasks separately. You may use the exact same policy for both tasks if you want, but you still need to submit twice. The results will be evaluated on the dataset dev split and the leaderboard will be updated within 24 hours.

- Test Phase: This phase is also split into Interactive Navigation and Social Navigation. Participants are expected to submit a maximum of 5 solutions during the last 15 days of the challenge. The solutions will be evaluated on the dataset test split and the results will NOT be made available until the end of the challenge.

- Winner Demo Phase: To increase visibility, the best three entries of each task of our challenge will have the opportunity to showcase their solutions in live or recorded video format during CVPR2022! All the top runners will be able to highlight their solutions and findings to the CVPR audience. Feel free to check out our presentation and our participants' presentations from our challenge last year on YouTube.

Timeline

| Challenge Announced | February 15, 2022 |

| EvalAI Leaderboard Open, Minival and Dev Phase Starts | February 28, 2022 |

| Test Phase Starts | May 15, 2022 |

| Challenge Dev and Test Phase Ends | June 5, 2022 |

| Winner Demo | June 19, 2022 |

Participate

Do you want to participate? Download our starter package TBA with iGibson and and start developing your own solution right away! You also need to register on the EvalAI portal TBA.

FAQ

-

Q: Where should I watch out for any latest announcement about this challenge?

A: Please check out the EvalAI forum of our challenge TBA. We will make all of our announcements there. -

Q: Should I submit the same policy for both tasks? Or should I submit different policies for each of

them?

A: You should submit your policy for the two tasks separately on EvalAI portal TBA. They are in two concurrent phases: Interactive Navigation and Social Navigation. In other words, you should submit twice. However, feel free to use the same policy and checkpoint for both tasks if you want. -

Q: Where can I find the tech spec for the real robot and sensor?

A: You can find the tech spec for the robot here and for the sensor Intel® RealSense? D435 here. We have also summarized these information into a easy-to-read table in our starter code TBA. -

Q: What is the robot's action space?

A: We believe that the best action space for natural and efficient navigation is continuous robot velocities (linear and angular). This will be our default action space. However, some participants from our last year's challenge has successfully trained policies using discrete action space (e.g. forward, turn left, turn right) and then implemented a simple converter that converts the discrete actions to robot velocities. -

Q: What if I have questions that are not answered in FAQ?

A: You are highly encouraged to post your question on the EvalAI forum of our challenge TBA. Our answers to your questions can help others as well. If you absolutely prefer to ask questions privately, you can email us at chengshu@stanford.edu and jaewooj@stanford.edu.

Organizers

The iGibson Challenge 2022 is organized by the Stanford Vision and Learning Lab and Robotics at Google:

Chengshu Li

Chengshu Li

Jaewoo Jang

Jaewoo Jang

Fei Xia

Fei Xia

Roberto Martín-Martín

Roberto Martín-Martín

Claudia D'Arpino

Claudia D'Arpino

Alexander Toshev

Alexander Toshev

Anthony Francis

Anthony Francis

Edward Lee

Edward Lee

Silvio Savarese

Silvio Savarese