About

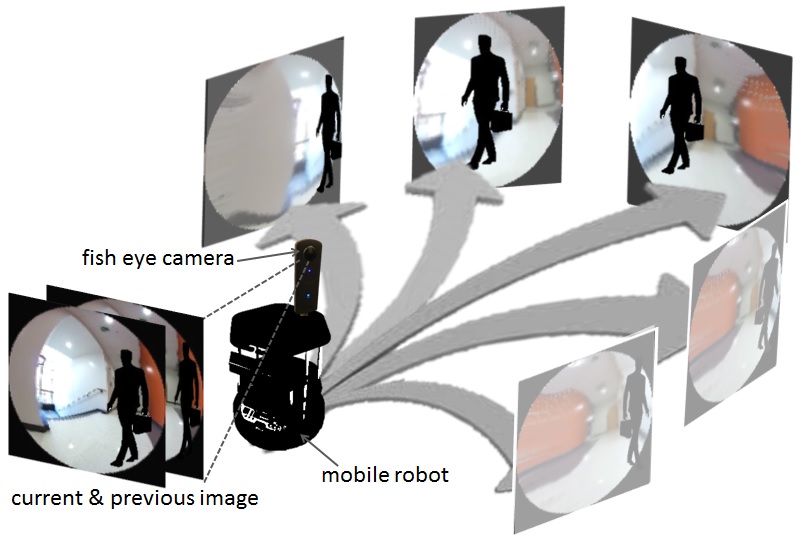

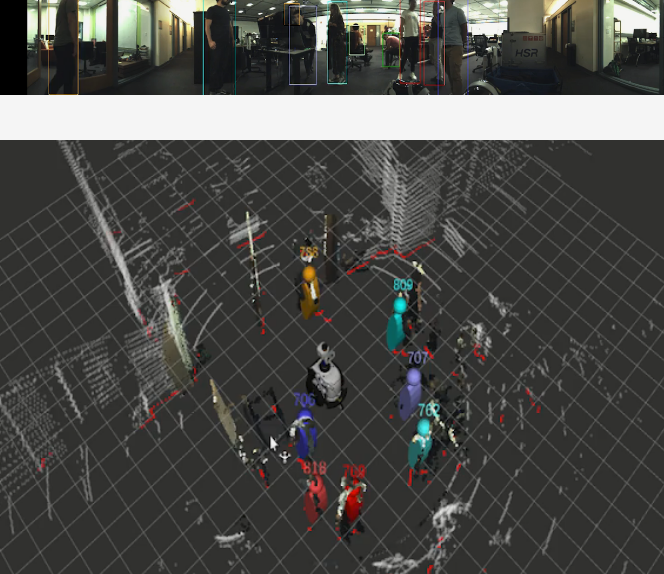

Humans have the innate ability to "read" one another. When people walk in a crowded public space such as a sidewalk, an airport terminal, or a shopping mall, they obey a large number of (unwritten) common-sense rules and comply with social conventions. For instance, as they consider where to move next, they respect personal space and yield right-of-way. The ability to model these “rules” and use them to understand and predict human motion in complex real-world environments is extremely valuable for the next generation of social robots.

Our work at the CVGL is bringing into practice a new generation of autonomous agents that can operate safely alongside humans in dynamic, crowded environments such as terminals, malls, or campuses. This enhanced level of proficiency opens up a broad new range of applications where robots can replace or augment human efforts. One class of tasks now amenable to automation is the delivery of small items (such as purchased goods, mail, food, tools, and documents) via spaces normally reserved for pedestrians.

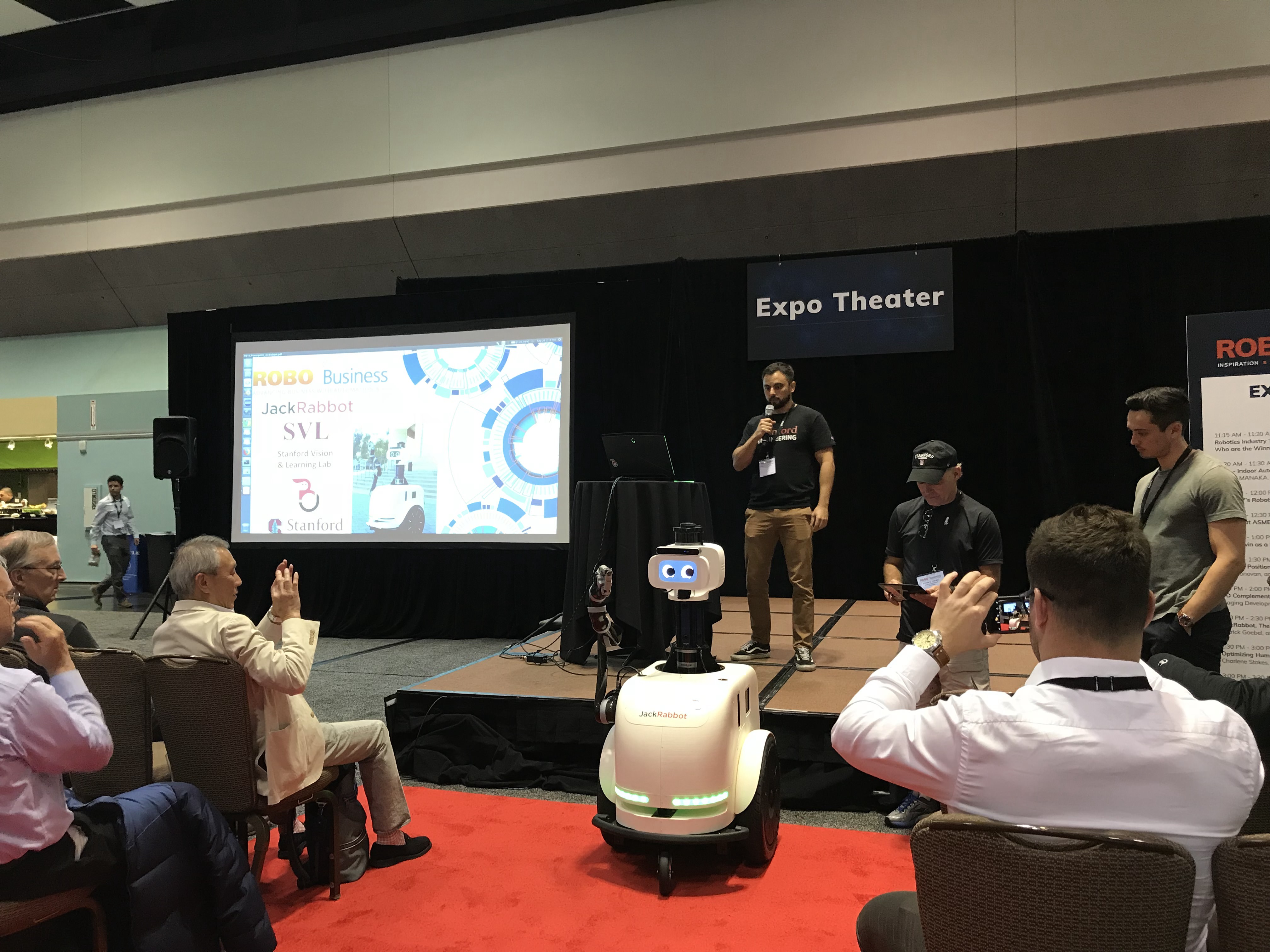

In this project, we are exploring this opportunity by developing a demonstration platform to make deliveries locally within the Stanford campus. The Stanford “Jackrabbot”, which takes its name from the nimble yet shy jackrabbit, is a self-navigating automated electric delivery cart capable of carrying small payloads. In contrast to autonomous cars, which operate on streets and highways, the Jackrabbot is designed to operate in pedestrian spaces, at a maximum speed of five miles per hour.